Can machine learning detect fake news, and if so, how? Those are the questions I was curious about. Fake news keeps growing around us, usually as clickbait, and it frequently goes viral. These are articles and stories written solely to mislead and misinform people into believing narratives that have no merit in the first place. According to one study, the proliferation of such media may be related to humans being more prone to spread lies than the truth.

Journalists and legitimate media outlets used to be the primary sources of information because they had to verify both the origin and the content of what they received; this isn’t always the case nowadays. With technological breakthroughs, rumour and propaganda mills have turned to powerful AI algorithms built to produce believable content that is typically not real.

The advancement of this technology is a significant step forward from Siri, optical character recognition, or spam filters, and teaching AI to detect and handle enormous volumes of data is a risky venture. The good news is that algorithms for distinguishing between human-generated and AI-generated material have been created. The same algorithms, however, can also be used to generate fake news.

What Is Machine Learning?

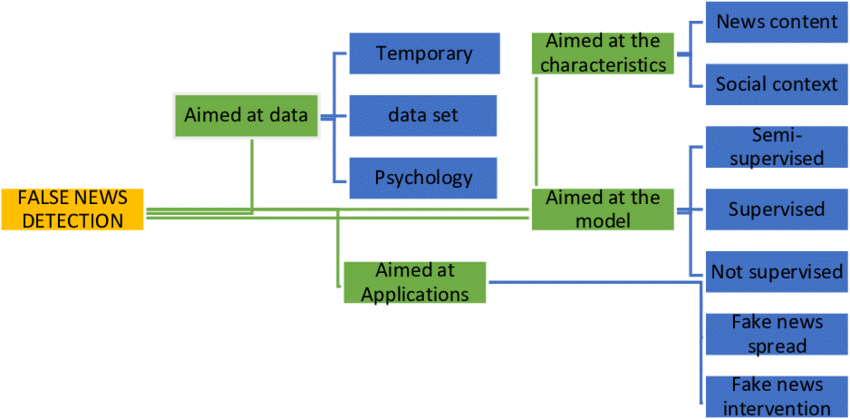

Machine learning is an application of AI that allows a machine to learn without being explicitly programmed. Instead of following hand-written rules, it learns patterns from data and uses them to make inferences about new data. There are three forms of learning in machine learning:

- Supervised learning

- Unsupervised learning

- Reinforcement learning

Supervised learning trains models with labelled data, so the ML system first learns from labelled examples before doing the job on unlabelled data. Supervised learning models appear quite often in fake news detection research.

Unsupervised learning trains models with unlabelled data, whereas reinforcement learning trains models by rewarding desired behaviour and penalizing undesired behaviour.
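The supervised setup described above can be sketched in a few lines. This is a minimal illustration, not a production detector: it uses a hand-rolled naive Bayes classifier (my choice of algorithm, picked because it fits in plain Python), and the headlines and their "real"/"fake" labels are invented for the example.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase a headline and split it into alphabetic word tokens."""
    cleaned = "".join(c.lower() if c.isalpha() else " " for c in text)
    return cleaned.split()


class NaiveBayesClassifier:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        self.label_counts = Counter(labels)      # label -> number of examples
        self.vocabulary = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocabulary.update(tokens)
        return self

    def predict(self, text):
        total_examples = sum(self.label_counts.values())
        vocab_size = len(self.vocabulary)
        tokens = tokenize(text)
        best_label, best_score = None, -math.inf
        for label, n_examples in self.label_counts.items():
            counts = self.word_counts[label]
            total_words = sum(counts.values())
            # Log prior plus the sum of smoothed log likelihoods.
            score = math.log(n_examples / total_examples)
            for token in tokens:
                score += math.log((counts[token] + 1) / (total_words + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented, hand-labelled toy headlines (illustrative only).
train_texts = [
    "Scientists publish peer reviewed study on vaccine safety",
    "City council approves budget after public hearing",
    "Central bank holds interest rates steady",
    "Shocking miracle cure doctors do not want you to know",
    "You will not believe this shocking celebrity secret",
    "Secret miracle trick melts fat overnight",
]
train_labels = ["real", "real", "real", "fake", "fake", "fake"]

model = NaiveBayesClassifier().fit(train_texts, train_labels)
print(model.predict("Shocking secret cure revealed"))
```

With only six training headlines the model simply memorizes a handful of sensational words, so treat it as a picture of the workflow (label, train, predict) rather than a usable detector.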

What Is Fake News?

The fundamental definition of fake news is information that leads people astray. Nowadays, fake news spreads like wildfire, and people share it without confirming it. It is frequently created to advance or reinforce specific beliefs, often in service of a political agenda.

There is also a financial motive. To produce online advertising income, media outlets must draw viewers to their websites, which rewards sensational stories whether or not they are true. As a result, it is vital to be able to recognize fake news.

Are Machine Learning Algorithms Reliable?

While computer algorithms may be less expensive than real-life human editors, the fact is that Facebook still needs to employ humans, and a lot of them, to edit and assess the content it promotes as news.

Facebook did use human editors for its trending topics, but they were dismissed in 2016 after reports alleged that they routinely suppressed conservative news stories. Facebook has since reintroduced human editors for some of its news content.

Pleasing all audiences will be difficult. The algorithms are biased, and if Facebook hires editors and moderators to double-check algorithm judgments, those editors will be labelled as unjust.

Given the sheer volume of data and the pace at which it appears, MIT has indicated that deploying artificial intelligence techniques might be beneficial. But artificial intelligence alone is not the answer.

Defeating computational propaganda will require some mix of human work and AI, but how this will happen is not yet evident. AI-assisted fact-checking is only one path forward.

Can Machine Learning Detect “Fake News”?

Fake news is not a new concept. The Roman Emperor Augustus launched a disinformation campaign against Mark Antony, a rival politician and general. Throughout the Cold War, the KGB employed deception to boost its political status.

Today, false news is used as a political tactic worldwide, and new technology is allowing individuals to spread it at unprecedented speeds. Artificial intelligence (AI) is one of these recent advancements that can assist journalists in developing a consistent false news detector. Still, AI may also empower others to propagate and even generate new types of disinformation.

Can machine learning be used to detect fake news? To answer that, we first have to address a complex question: what is truth? Rather than addressing this topic directly, scholars and companies attempt to answer simpler forms of it.

Can AI, for example, tell if a machine or a human authored a piece of material?

Yes, it can — to a certain degree of precision.

Techniques that analyze linguistic clues such as word patterns, syntactic structure, readability qualities, and so on can distinguish between human and computer-generated content. Similar feature-based algorithms may be used to tell the difference between synthetic images and actual pictures.
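To give a feel for what "linguistic clues" look like as numbers, here is a small sketch that turns a text into a few stylometric features. The particular features (average sentence length, average word length, type-token ratio, exclamation rate) are my own illustrative picks, not a list taken from any specific detector; a real system would feed many more such features into a classifier.

```python
import re


def linguistic_features(text):
    """Return a few simple stylometric features for a piece of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[a-z']+", text.lower())
    if not sentences or not words:
        return {
            "avg_sentence_length": 0.0,
            "avg_word_length": 0.0,
            "type_token_ratio": 0.0,
            "exclamation_rate": 0.0,
        }
    return {
        # Words per sentence: a crude readability signal.
        "avg_sentence_length": len(words) / len(sentences),
        # Characters per word: longer words suggest denser prose.
        "avg_word_length": sum(len(w) for w in words) / len(words),
        # Distinct words divided by total words: vocabulary variety.
        "type_token_ratio": len(set(words)) / len(words),
        # Exclamation marks per sentence: a rough sensationalism cue.
        "exclamation_rate": text.count("!") / len(sentences),
    }


sample = "You won't believe it! It is shocking! Share it now!"
features = linguistic_features(sample)
for name, value in features.items():
    print(f"{name}: {value:.2f}")
```

On their own these numbers prove nothing about a text; they only become useful when a model learns how they differ across many labelled examples.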

However, recent successes of generative adversarial networks indicate that algorithms may soon mimic people so successfully that the information they generate will be indistinguishable from that created by people.

A generative adversarial network is built from two complementary deep neural networks. The generator takes a series of actual samples (text or pictures) as input and tries to duplicate their patterns to produce synthetic data. The discriminator, in contrast, learns to distinguish between actual and manufactured data.

The generator and the discriminator try to outplay each other, and as a result, both continually improve. Ideally, the process ends with a generator capable of producing synthetic samples that are indistinguishable from genuine ones.
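The back-and-forth between the two networks can be shown with a deliberately tiny sketch. Everything here is an assumption made for illustration: the "real data" is a one-dimensional Gaussian, the generator is a straight line `G(z) = a * z + b`, the discriminator is a single logistic unit, and the gradients are written out by hand. Real GANs for text or images use deep networks and a framework such as PyTorch or TensorFlow.

```python
import math
import random


def sigmoid(x):
    """Numerically safe logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, real_mean=4.0, real_std=0.5, seed=0):
    """Alternate discriminator and generator updates on 1-D data."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters: G(z) = a * z + b
    w, c = 0.0, 0.0  # discriminator parameters: D(x) = sigmoid(w * x + c)
    for _ in range(steps):
        x_real = rng.gauss(real_mean, real_std)  # one genuine sample
        z = rng.gauss(0.0, 1.0)                  # noise fed to the generator
        x_fake = a * z + b                       # one synthetic sample

        # Discriminator step: minimise -[log D(x_real) + log(1 - D(x_fake))].
        d_real = sigmoid(w * x_real + c)
        d_fake = sigmoid(w * x_fake + c)
        grad_w = -(1.0 - d_real) * x_real + d_fake * x_fake
        grad_c = -(1.0 - d_real) + d_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator step: minimise -log D(G(z)), the non-saturating loss.
        d_fake = sigmoid(w * x_fake + c)
        grad_a = -(1.0 - d_fake) * w * z
        grad_b = -(1.0 - d_fake) * w
        a -= lr * grad_a
        b -= lr * grad_b
    return {"generator": (a, b), "discriminator": (w, c)}


result = train_toy_gan()
print("generator (a, b):", result["generator"])
print("discriminator (w, c):", result["discriminator"])
```

The hope is that the generator's offset `b` drifts toward the mean of the real data, but GAN training is not guaranteed to converge, and a setup this small tends to oscillate rather than settle.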

The recently released GPT-3 from OpenAI can compose essays, stories, emails, poetry, business memos, technical instructions, and so on. It can provide philosophical answers, simplify legal paperwork, and translate across languages. For short chunks of text, it is nearly impossible to distinguish GPT-3 output from human-written text.

Is there another way to detect fake news?

Another common way to detect fake news is to analyze the accuracy of the information in an article, regardless of whether a person or a machine wrote it. Services like Snopes, PolitiFact, FactCheck, and others employ human editors to perform research and contact primary sources to verify a statement or an image. These organizations are also increasingly relying on artificial intelligence to help them sift through massive amounts of data.

How you write facts differs from how you write falsehoods. Researchers are using this to teach machines to distinguish between truth and fiction by training AI models on a corpus of April Fools' fake news pieces written over 14 years.

A different method of verification involves awarding a reputation score to each news website. Only when websites with high reputation ratings approve a statement is it considered verified. The Trust Project, for example, assesses a news outlet’s reliability using characteristics such as ethical standards, sources and methodologies, corrections processes, and so on.
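The reputation rule described above is simple enough to write down directly. This is only a sketch under assumptions of my own: the site names and scores are invented, and the thresholds (`min_score`, `min_endorsements`) are hypothetical parameters, not values used by The Trust Project or any real service.

```python
def is_verified(endorsing_sites, reputation, min_score=0.8, min_endorsements=2):
    """Return True if enough high-reputation sites endorse a statement.

    endorsing_sites: iterable of site names that reported the statement as true.
    reputation: dict mapping site name -> score in [0, 1].
    Sites missing from the dict are treated as having a score of 0.
    """
    trusted = {
        site for site in endorsing_sites
        if reputation.get(site, 0.0) >= min_score
    }
    return len(trusted) >= min_endorsements


# Hypothetical sites and scores, made up for illustration.
reputation = {
    "example-wire.test": 0.95,
    "example-daily.test": 0.85,
    "example-rumours.test": 0.20,
}

print(is_verified(["example-wire.test", "example-daily.test"], reputation))
print(is_verified(["example-wire.test", "example-rumours.test"], reputation))
```

The first call passes because two trusted sites back the statement; the second fails because only one of its endorsers clears the threshold. The hard part in practice is producing the reputation scores, not applying them.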

In addition to flagging possibly false articles, social media platforms have begun to take severe action against repeat offenders. These steps include limiting the reach of accounts that spread false news or hate speech, or even banning them entirely.

Facebook’s new ‘group quality’ feature is one example of this. It would be beneficial if social media platforms displayed the trust index of news sources with red-flag items labeled as fake.

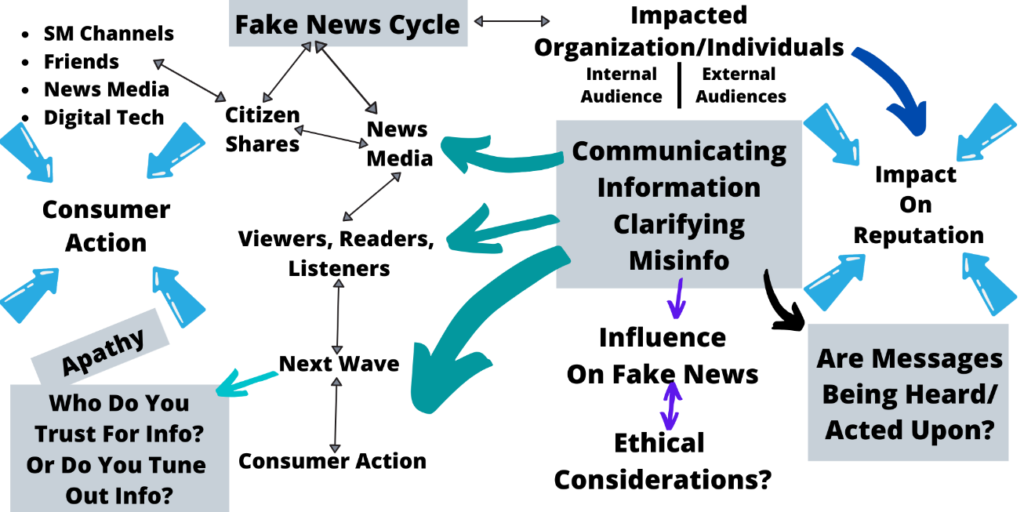

An Endless Cycle

However, such solutions assume that individuals who distribute fake news will not modify their tactics. In practice, they often change strategies, altering the substance of fake postings to make them appear more legitimate.

Using AI to analyze information might also expose, and intensify, certain societal prejudices. These can relate to gender, ethnic, or regional stereotypes. It may also have political ramifications, perhaps limiting the expression of certain opinions.

YouTube, for example, has stopped advertising on some sorts of video channels, costing their producers money. Context is equally important. The meanings of words can change over time, and the same word might have distinct meanings on liberal and conservative websites. For example, a post containing the terms WikiLeaks and DNC may be more likely to be news on a more left-wing site, but on a right-wing site it may allude to a specific set of conspiracy theories.

Can The Loop Be Broken?

Fake news spreads without being checked and confirmed, and its spread fuels the demand for more. According to a survey published by Statista, 10% of respondents admitted to sharing a news story online that they knew was false, while 49% had shared news that they later discovered to be false.

For the time being, all you can do is raise awareness about the spread of fake news. In other words, refusing to share such material is how you undermine its legitimacy.

Detecting false news is a complicated process that begins with education and awareness. You must validate the source. Fact-checked or peer-reviewed material is usually considered to be of high quality. It is best to depend on insights that originate from credible sources or respected research firms.

More individuals than ever before use the internet as their primary source of information. However, because this medium is so easily contaminated with erroneous information, you must carefully analyze and assess everything you take from internet sources.

Human Intelligence Is The Real Key

The most effective strategy to stop the spread of fake news may be to rely on people. The social implications of false information are significant: increased political polarization, increased partisanship, and lost faith in mainstream media and government.

If more people realized the stakes were this high, they might be more skeptical of information, especially if it is emotionally charged. Emotion is a powerful way to attract people’s attention.

When someone finds an upsetting message, it is advisable to study the content rather than share it right away. The act of sharing also gives legitimacy to a post: when other people read it, they note that it was shared by someone they know and presumably trust at least a little, and they are less likely to notice if the source is suspect.

Social media platforms such as YouTube and Facebook might voluntarily choose to label their material, explicitly indicating if a reliable source has verified an item claiming to be news.

Facebook may train AI through collaborations with news organizations and volunteers, constantly modifying the system to adapt to propagandists’ changes in themes and techniques.

It will not capture every bit of news released online, but it will make it easier for a significant number of people to distinguish between reality and fiction. It might lessen the likelihood of false and misleading tales becoming popular on the internet.

Reassuringly, people who have had some exposure to factual news are better at telling honest information from fraudulent information. The idea is to ensure that at least part of what people see on the internet is accurate.

A Final Word

Can machine learning detect fake news? The short answer is “yes, to some extent.” By itself, data science or machine learning cannot reliably determine whether or not a piece of news is fake. There are too many nuances and variables to consider. However, it can assist editors in identifying what is potentially fake news and speed up the process of fake news detection.
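That "assist the editors" role can be pictured as a triage step: the model scores every article and only the suspicious ones land in a human review queue. The sketch below is an assumption-heavy illustration. The `sensationalism_score` function is a stand-in for a trained model, and the word list and threshold are invented for the example.

```python
# Assumed word list standing in for what a trained model would learn.
SENSATIONAL_WORDS = {"shocking", "miracle", "secret", "unbelievable"}


def sensationalism_score(text):
    """Stand-in for a model score: the share of words that are sensational."""
    words = [w.strip(".,!?\"'").lower() for w in text.split()]
    if not words:
        return 0.0
    return sum(w in SENSATIONAL_WORDS for w in words) / len(words)


def triage(articles, score_fn, review_threshold=0.5):
    """Split articles into a review queue (highest score first) and the rest."""
    scored = [(score_fn(article), article) for article in articles]
    flagged = [pair for pair in scored if pair[0] >= review_threshold]
    flagged.sort(key=lambda pair: pair[0], reverse=True)
    review_queue = [article for _, article in flagged]
    passed = [article for score, article in scored if score < review_threshold]
    return review_queue, passed


articles = [
    "Shocking miracle cure found",
    "Council approves new budget",
    "Secret plan revealed today",
]
review_queue, passed = triage(articles, sensationalism_score, review_threshold=0.2)
print("For human review:", review_queue)
print("Passed automatically:", passed)
```

The human editor still makes the final call; the model only decides what they look at first.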

If you made it this far in the article, thank you very much.

I hope this information was of use to you.

Feel free to use any information from this page. I’d appreciate it if you can simply link to this article as the source. If you have any additional questions, you can reach out to malick@malicksarr.com or message me on Twitter. If you want more content like this, join my email list to receive the latest articles. I promise I do not spam.


![Can Machine Learning Detect Fake News? [General Overview]](/images/articles/can-machine-learning-detect-fake-news/Fake-news-detection.png)